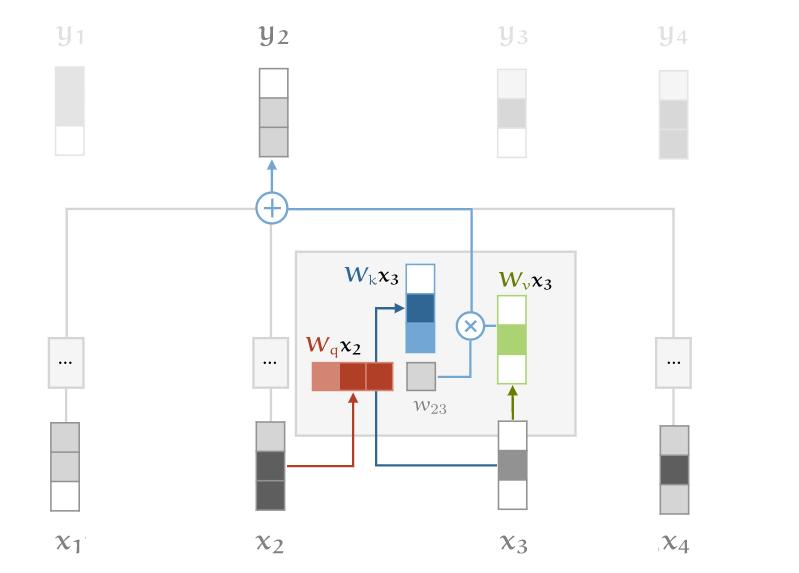

Scaled Dot Product Attention

- Vaswani et al., 2017

- Q is the query, K is the key, V is the value. Same dims

- , ,

- Softmax is sensitive to large values: they saturate it and the gradients get tiny. Which sucks for the architecture

- The avg magnitude of the dot product grows with the embedding dimension $k$ (roughly as $\sqrt{k}$). So scale back by dividing by $\sqrt{k}$.

- Vector in $\mathbb{R}^k$ with all values as $c$

- Euclidean length is $\sqrt{k}\,c$

- Generalization of Soft Attention

- Attention Alignment score
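
The notes above can be sketched in a few lines of NumPy. This is a minimal single-head, unbatched sketch, not a reference implementation: it assumes the standard form $\mathrm{softmax}(QK^\top / \sqrt{k})\,V$ from Vaswani et al., 2017, with $k$ as the embedding dimension. The names `softmax` and `scaled_dot_product_attention`, the sizes `n` and `k`, the seed, and the value of `c` are all illustrative choices, not taken from the notes.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max along the axis for numerical stability;
    # this does not change the result.
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    # Assumed shapes: Q and K are (n, k), V is (n, d_v).
    k = Q.shape[-1]
    # Alignment scores: dot products of queries with keys,
    # scaled back by sqrt(k) so softmax does not saturate.
    scores = Q @ K.T / np.sqrt(k)
    # Each row of weights sums to 1.
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

# Hypothetical sizes and seed, chosen only for illustration.
rng = np.random.default_rng(0)
n, k = 4, 64
Q = rng.standard_normal((n, k))
K = rng.standard_normal((n, k))
V = rng.standard_normal((n, k))

out, weights = scaled_dot_product_attention(Q, K, V)

# A vector in R^k with all values c has Euclidean length sqrt(k) * c,
# which is the growth the sqrt(k) factor divides out.
c = 0.5
length = np.linalg.norm(np.full(k, c))
```

Here `out` has one row per query, and `length` equals `np.sqrt(k) * c`, matching the Euclidean-length argument for the scaling factor.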