Chapter 9 - Regularization

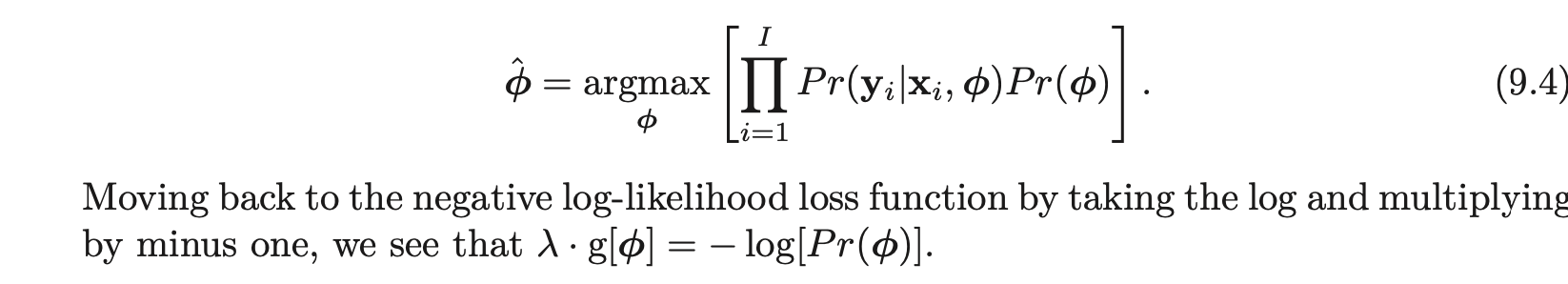

Skeptic: Mixup

Skeptic: How does this tie into optimizers?

Skeptic: Label Smoothing (mentioned later)

Batch Normalization - The idea is to normalize the inputs of each layer so that, across a mini-batch, each feature has zero mean and unit standard deviation.

Skeptic: AdamW
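
The batch normalization note above can be made concrete with a small sketch. This is a plain-Python illustration of the training-time computation only, not any framework's implementation. The function name `batch_norm`, the scalar `gamma` and `beta` parameters, and the `eps` value are assumptions chosen for the example. Real layers learn one scale and shift per feature and keep running statistics for use at inference time.

```python
import math


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize each feature column of `batch` over the mini-batch.

    `batch` is a list of equal-length rows (one row per example).
    `gamma` and `beta` are a hypothetical scalar scale and shift;
    `eps` guards against division by zero when a feature is constant.
    """
    n = len(batch)
    num_features = len(batch[0])
    out = [[0.0] * num_features for _ in range(n)]
    for j in range(num_features):
        column = [row[j] for row in batch]
        mean = sum(column) / n
        # Biased (population) variance over the mini-batch.
        var = sum((x - mean) ** 2 for x in column) / n
        inv_std = 1.0 / math.sqrt(var + eps)
        for i in range(n):
            out[i][j] = gamma * (column[i] - mean) * inv_std + beta
    return out


# Two features on very different scales end up on a comparable scale.
activations = [[1.0, 200.0], [2.0, 400.0], [3.0, 600.0]]
normalized = batch_norm(activations)
```

After the call, each column of `normalized` has a mean of approximately zero and a standard deviation of approximately one, regardless of the original scale of that feature.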