Strided Attention

- paper

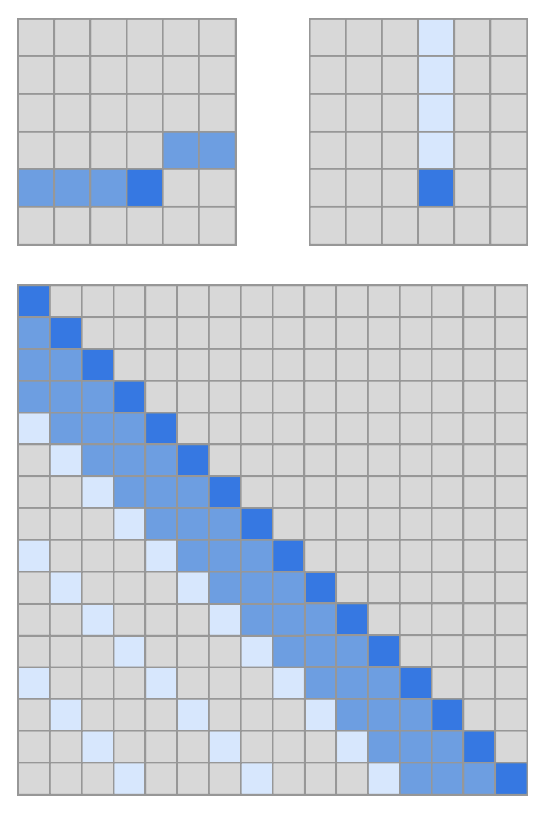

- Sparse factorizations of the Attention matrix

- Reduce the cost of attention from $O(n^2)$ to $O(n\sqrt{n})$ in the sequence length $n$

- Recompute Attention matrices to save memory

- Fast Attention kernels

- Works well for data with a periodic structure, such as images or music

- For data without a periodic structure (e.g. text), the Strided pattern can fail: an element's spatial coordinates do not necessarily correlate with the positions where it will be most relevant in the future
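
To make the Strided pattern concrete, here is a minimal sketch in plain Python. It assumes the usual two-head formulation: one head attends to the previous `stride` positions, the other attends to every `stride`-th earlier position, with `stride` chosen near $\sqrt{n}$. The function name `strided_attention_sets` and the toy sizes are illustrative only, not taken from the paper's code.

```python
import math

def strided_attention_sets(n, stride):
    """For each query position i, list the key positions each head may attend to.

    local[i]   : the previous `stride` positions plus i itself (causal window)
    strided[i] : every earlier position j with (i - j) divisible by `stride`
    """
    local, strided = [], []
    for i in range(n):
        start = max(0, i - stride)
        local.append(list(range(start, i + 1)))
        strided.append([j for j in range(i + 1) if (i - j) % stride == 0])
    return local, strided

n = 16                    # toy sequence length (assumption)
stride = math.isqrt(n)    # stride close to sqrt(n)
local, strided = strided_attention_sets(n, stride)

# Compare how many (query, key) pairs are evaluated against dense causal attention.
dense_pairs = n * (n + 1) // 2
sparse_pairs = sum(len(s) for s in local) + sum(len(s) for s in strided)
print(local[5], strided[9], dense_pairs, sparse_pairs)
```

Each position attends to roughly `stride` keys in the local head and `n / stride` keys in the strided head, so with `stride` near $\sqrt{n}$ the total work grows like $n\sqrt{n}$ rather than $n^2$. The saving is small at toy sizes and only becomes significant for long sequences.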