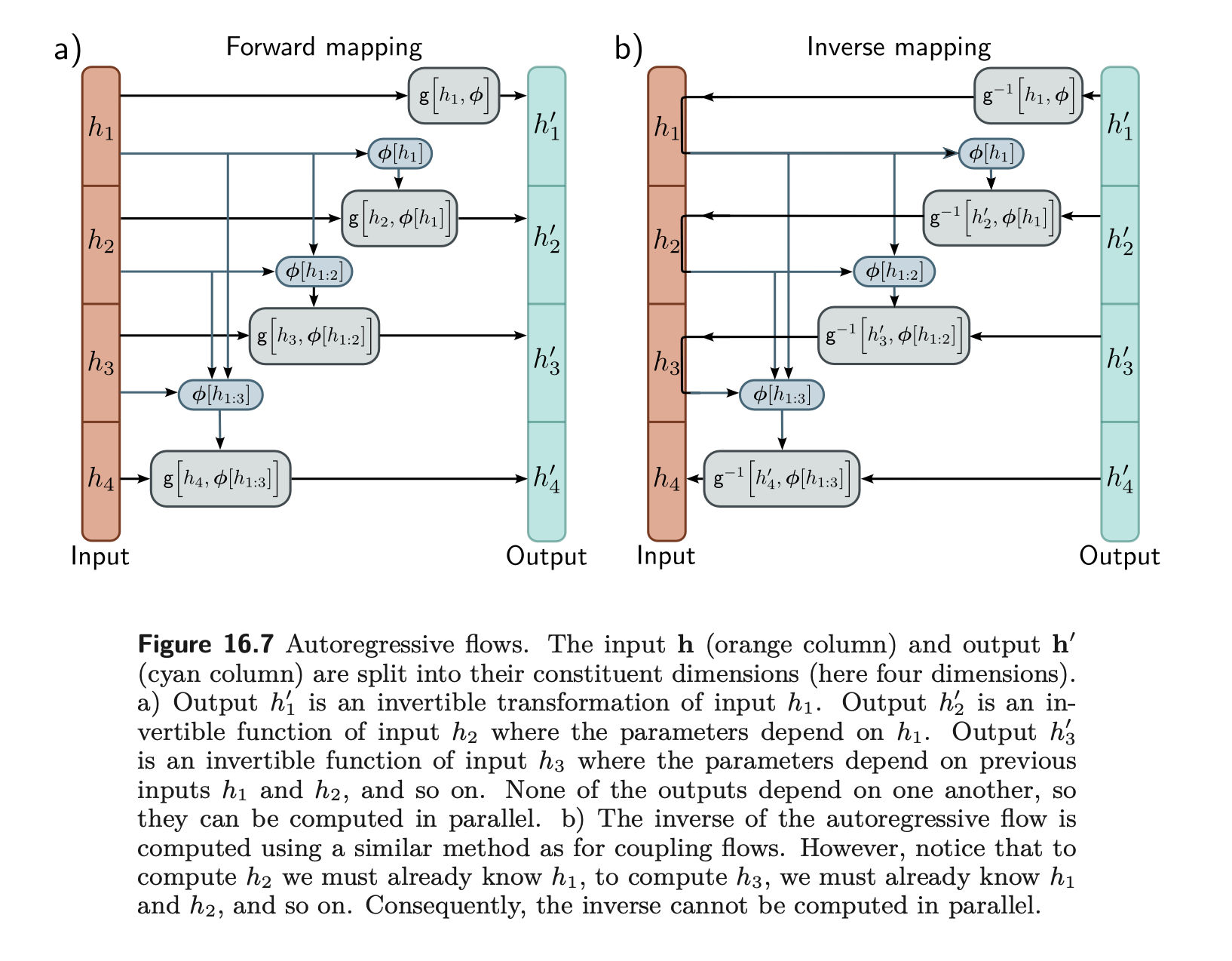

Autoregressive Flows

- autoregressive models can be used as normalizing flows

- an autoregressive model samples each pixel of an image sequentially, conditioned on the previous pixels in a fixed order

- model each conditional density as a Gaussian: $p(x_i \mid x_{<i}) = \mathcal{N}(x_i;\ \mu_i(x_{<i}),\ \sigma_i(x_{<i})^2)$

- From MADE: Masked Autoencoder for Distribution Estimation and Masked Autoregressive Flow for Density Estimation:

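- the sampling noise at each step can be used as a latent variable, where $\mu_i$ and $\sigma_i$ are the mean and standard deviation of the Gaussian conditional for pixel $i$:

- $x_i = \mu_i(x_{<i}) + \sigma_i(x_{<i})\,\epsilon_i$ and $\epsilon_i \sim \mathcal{N}(0, 1)$

- the latent factor = the noise vector $\epsilon = (\epsilon_1, \dots, \epsilon_D)$

- observed → latent: $\epsilon_i = (x_i - \mu_i(x_{<i})) / \sigma_i(x_{<i})$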
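The noise-as-latent idea can be sketched in a few lines of plain Python. This is a minimal illustration, not the method from either paper. It assumes the usual affine Gaussian form, where each value is a mean plus a standard deviation times standard normal noise. The `conditioner` function is a hypothetical toy stand-in for a masked network such as MADE, and all names here are made up for the example.

```python
import math
import random


def conditioner(prefix):
    """Hypothetical toy conditioner: maps the previous values to (mu, sigma).

    A real model would use a masked network (e.g. MADE) here.
    """
    s = sum(prefix)
    mu = 0.5 * s
    sigma = math.exp(0.1 * math.tanh(s))  # always positive
    return mu, sigma


def sample(eps):
    """Noise -> data. Sequential: x_i can only be computed after x_{<i}."""
    x = []
    for e in eps:
        mu, sigma = conditioner(x)
        x.append(mu + sigma * e)
    return x


def invert(x):
    """Data -> noise. Every eps_i depends only on the observed x, so the
    iterations are independent of each other and could run in parallel."""
    eps = []
    log_det = 0.0  # log |det d(eps)/d(x)| = -sum_i log sigma_i
    for i, xi in enumerate(x):
        mu, sigma = conditioner(x[:i])
        eps.append((xi - mu) / sigma)
        log_det -= math.log(sigma)
    return eps, log_det


def log_prob(x):
    """Change of variables: log p(x) = log N(eps; 0, I) + log_det."""
    eps, log_det = invert(x)
    base = sum(-0.5 * e * e - 0.5 * math.log(2 * math.pi) for e in eps)
    return base + log_det


rng = random.Random(0)
eps = [rng.gauss(0.0, 1.0) for _ in range(4)]
x = sample(eps)
eps_rec, _ = invert(x)  # recovers the noise that generated x
```

Inverting a sample returns the noise that produced it, which is what lets the noise act as the latent code. The log-density is the sum of the Gaussian conditional log-densities.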

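- Since each pixel is sampled only after the previous ones, sampling can't be parallelized. Instead, following Improved Variational Inference with Inverse Autoregressive Flow, we can use inverse autoregressive flows (IAF), which condition each step on the previous noise variables rather than the previous pixels, so all pixels can be sampled in parallel.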
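The trade-off can be sketched with the same kind of toy setup. This is an illustration under assumptions, not the implementation from the IAF paper: the `conditioner` is a hypothetical stand-in for a masked network, and the affine Gaussian form is assumed. The only change from the sequential sampler is that the mean and scale are computed from the previous noise values instead of the previous outputs.

```python
import math
import random


def conditioner(prefix):
    """Hypothetical toy conditioner: maps previous noise values to (mu, sigma)."""
    s = sum(prefix)
    mu = 0.5 * s
    sigma = math.exp(0.1 * math.tanh(s))  # always positive
    return mu, sigma


def iaf_sample(eps):
    """Noise -> data. Each x_i depends only on the known noise vector, so the
    iterations are independent of each other and could run in parallel."""
    x = []
    log_det = 0.0  # log |det d(x)/d(eps)| = sum_i log sigma_i
    for i, e in enumerate(eps):
        mu, sigma = conditioner(eps[:i])
        x.append(mu + sigma * e)
        log_det += math.log(sigma)
    return x, log_det


def iaf_invert(x):
    """Data -> noise. Sequential: eps_i needs eps_{<i} to be recovered first."""
    eps = []
    for xi in x:
        mu, sigma = conditioner(eps)
        eps.append((xi - mu) / sigma)
    return eps


rng = random.Random(0)
eps = [rng.gauss(0.0, 1.0) for _ in range(4)]
x, log_det = iaf_sample(eps)

# The density of a freshly drawn sample is cheap because its noise is already known.
log_q = sum(-0.5 * e * e - 0.5 * math.log(2 * math.pi) for e in eps) - log_det

eps_rec = iaf_invert(x)  # slow path, only needed for externally given data
```

Sampling and scoring the model's own samples need no sequential pass here, while recovering the noise for externally supplied data does. This is the reverse of the sequential-sampling case.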

- From MADE - Masked autoencoder for distribution estimation and Masked autoregressive flow for density estimation,