LIME

- @ribeiroWhyShouldTrust2016

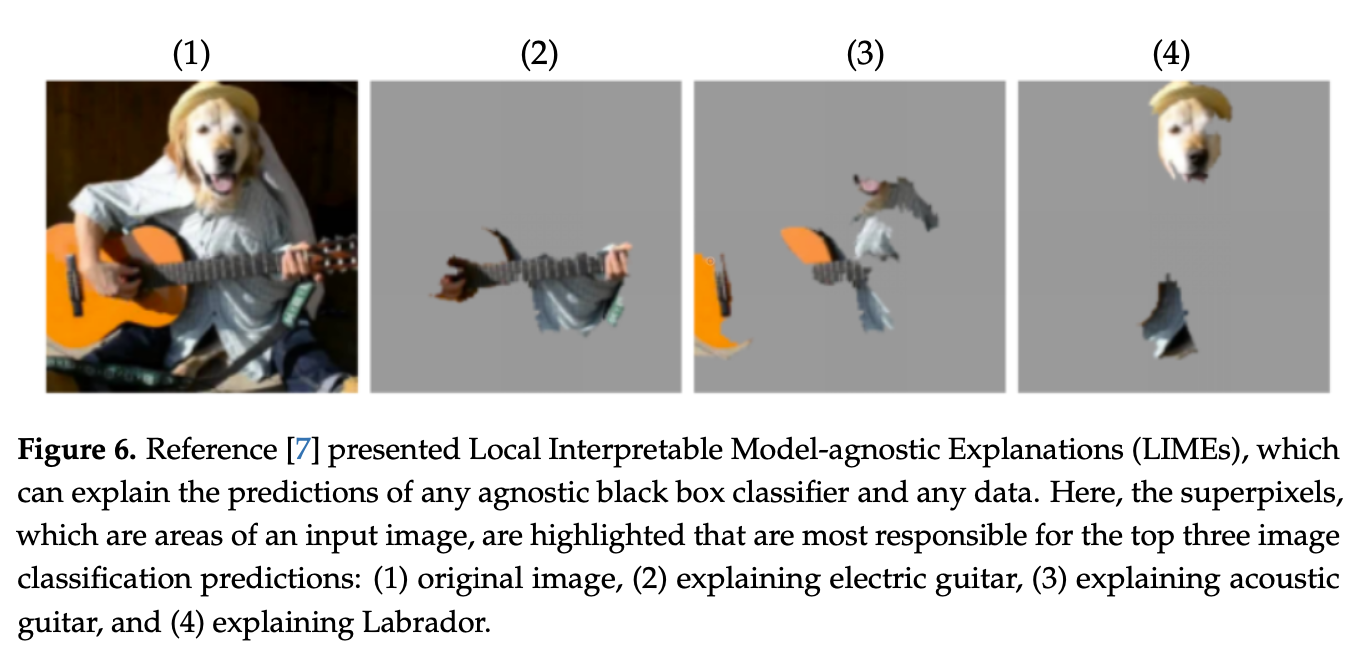

- novel, model-agnostic, modular, and extensible explanation technique that explains the predictions of any classifier in an interpretable and faithful manner

- works by learning an interpretable model locally around the prediction

- SP-LIME

- method to explain models by selecting representative individual predictions and their explanations in a non-redundant way, framing the task as a submodular optimization problem and providing a global view of the model to users

- flexibility of these methods is demonstrated by explaining different models for text (e.g. random forests) and image classification (e.g. neural networks)

- usefulness of explanations is shown via novel experiments, both simulated and with human subjects
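
A minimal sketch of the two ideas above, to make the notes concrete. It is not the authors' implementation: `explain_instance` and `submodular_pick` are hypothetical names, the local surrogate is fitted with plain weighted least squares rather than the sparse linear model used in the paper, switched-off features are assumed to be replaced by 0, and the feature-importance formula (square root of summed absolute weights) is the variant recalled from the paper's text experiments.

```python
import numpy as np


def explain_instance(predict_fn, x, num_samples=1000, kernel_width=0.75, seed=0):
    """Fit a locally weighted linear surrogate around x; return one weight per feature."""
    rng = np.random.default_rng(seed)
    d = x.shape[0]
    # Interpretable representation: binary masks saying which features are kept.
    masks = rng.integers(0, 2, size=(num_samples, d))
    masks[0] = 1  # the unperturbed instance is always part of the sample
    # Assumption: a switched-off feature is replaced by a 0 baseline.
    Z = masks * x
    y = predict_fn(Z)
    # Proximity weights: exponential kernel on the distance to the original instance.
    dist = np.sqrt((1 - masks).sum(axis=1) / d)
    w = np.exp(-(dist ** 2) / kernel_width ** 2)
    # Weighted least squares with an intercept column (stand-in for a sparse fit).
    A = np.hstack([masks, np.ones((num_samples, 1))])
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return coef[:d]


def submodular_pick(W, budget):
    """Greedily pick up to `budget` rows of the explanation matrix W that cover
    the most important features without redundancy."""
    W = np.abs(np.asarray(W, dtype=float))
    # Global importance of each feature across all explanations (assumed formula).
    importance = np.sqrt(W.sum(axis=0))
    covered = np.zeros(W.shape[1], dtype=bool)
    chosen = []
    for _ in range(min(budget, W.shape[0])):
        # Marginal coverage gain of each candidate; already chosen rows get -1.
        gains = [
            -1.0 if i in chosen else importance[(W[i] > 0) & ~covered].sum()
            for i in range(W.shape[0])
        ]
        best = int(np.argmax(gains))
        chosen.append(best)
        covered |= W[best] > 0
    return chosen
```

Usage: call `explain_instance` on each instance of interest with the black-box model's `predict_fn`, stack the resulting weight vectors into a matrix `W`, and pass it to `submodular_pick` to choose which explanations to show. The pick step only avoids redundancy when explanations are sparse (many exact zeros in `W`), which is why the paper's sparse surrogate matters there; with the dense least-squares weights above, small weights would need to be thresholded to zero first.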