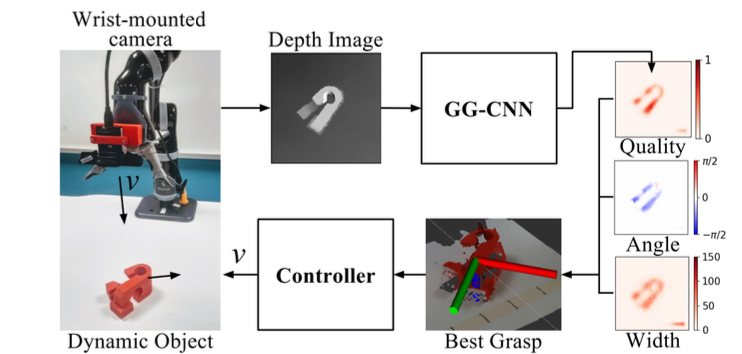

GG-CNN (Generative Grasping Convolutional Neural Network)

- Learns an object-agnostic grasping function.

- Uses unimodal input: a depth image only.

- Generates a pixel-wise grasp configuration for the given input.

- The gripper approaches the target object in a top-down manner. The method uses a shallow network and an eye-in-hand camera configuration.

- Morrison, Douglas, Peter Corke, and Jürgen Leitner. “Closing the loop for robotic grasping: A real-time, generative grasp synthesis approach.” RSS (2018).
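
To make the "pixel-wise grasp configuration" point concrete, here is a minimal sketch of how a single grasp could be read off per-pixel output maps. It is not the authors' code. The map names (`quality`, `cos2`, `sin2`, `width`), the encoding of the angle as a cos/sin pair of twice the angle, and the helper `select_best_grasp` are all assumptions made for illustration, and the toy maps stand in for real network output.

```python
import math
from typing import List, NamedTuple

# One value per pixel, indexed [row][col]. Hypothetical stand-in for network output.
Map = List[List[float]]


class Grasp(NamedTuple):
    row: int
    col: int
    angle: float    # gripper rotation in radians, within [-pi/2, pi/2]
    width: float    # gripper opening, in the units of the width map
    quality: float  # predicted grasp quality at this pixel


def select_best_grasp(quality: Map, cos2: Map, sin2: Map, width: Map) -> Grasp:
    """Pick the pixel with the highest quality and decode its grasp parameters."""
    best = None
    for r, row in enumerate(quality):
        for c, q in enumerate(row):
            if best is None or q > best[0]:
                best = (q, r, c)
    if best is None:
        raise ValueError("quality map is empty")
    q, r, c = best
    # Assumption: the angle is stored as (cos 2a, sin 2a), so halve the atan2 result.
    angle = 0.5 * math.atan2(sin2[r][c], cos2[r][c])
    return Grasp(row=r, col=c, angle=angle, width=width[r][c], quality=q)


# Toy 2x3 maps: the best pixel is (1, 1), rotated 45 degrees, width 25.
quality = [[0.1, 0.2, 0.3], [0.4, 0.9, 0.5]]
cos2 = [[1.0, 1.0, 1.0], [1.0, 0.0, 1.0]]
sin2 = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
width = [[10.0, 10.0, 10.0], [10.0, 25.0, 10.0]]

grasp = select_best_grasp(quality, cos2, sin2, width)
print(grasp)
```

Because every pixel carries its own grasp candidate, selection reduces to an argmax over the quality map, which is cheap enough to repeat on every depth frame from the eye-in-hand camera.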