Bayesian Model Estimation

Unlike the frequentist approach, Bayesian estimation does not rely on the data alone. A frequentist estimator such as the sample mean is sometimes a poor metric because it has high variance: for a real-valued distribution, different trials can give noticeably different results.
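To make the variance point concrete, here is a minimal sketch that repeats an experiment many times and measures how much the sample mean moves between trials. The standard normal data, the sample sizes, and the helper name `sample_means` are illustrative assumptions, not part of the notes.

```python
import random
import statistics

def sample_means(n_trials, n, seed=0):
    """Draw n_trials independent samples of size n and return each sample's mean."""
    rng = random.Random(seed)
    return [
        statistics.fmean(rng.gauss(0.0, 1.0) for _ in range(n))
        for _ in range(n_trials)
    ]

# Assumed setup: standard normal data, so the true mean is 0.
small = sample_means(n_trials=1000, n=5)
large = sample_means(n_trials=1000, n=200)

# Spread of the estimator across trials; theory gives sigma / sqrt(n).
spread_small = statistics.stdev(small)
spread_large = statistics.stdev(large)
print(f"n=5:   std of sample mean = {spread_small:.3f}")
print(f"n=200: std of sample mean = {spread_large:.3f}")
```

With only 5 observations per trial the estimate swings widely from trial to trial, which is the regime where extra information from a prior can help.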

The task is to estimate θ

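Bayesian Prior

Now there are two sources of information about the true distribution $p_X(\theta)$:

The likelihood $p_X^{\otimes}(D \mid \theta)$, viewed as a function of $\theta$

The prior plausibility of $\theta$, given by $h(\theta)$

Since these are independent sources, we can combine them by multiplication: $p_X^{\otimes}(D \mid \theta)\, h(\theta)$, which is proportional to the posterior $h(\theta \mid D)$.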
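The multiplication of likelihood and prior can be sketched numerically on a grid of candidate θ values. This is only an illustration under stated assumptions: a Gaussian likelihood with known standard deviation, a Gaussian prior, made-up observations, and hypothetical helper names (`grid_posterior`, `gaussian_prior`).

```python
import math

def gaussian_prior(theta, mu0=0.0, tau=1.0):
    """Unnormalised Gaussian prior h(theta) (assumed form)."""
    return math.exp(-0.5 * ((theta - mu0) / tau) ** 2)

def grid_posterior(data, grid, prior, sigma=1.0):
    """Multiply likelihood and prior at each grid point, then normalise."""
    log_post = []
    for theta in grid:
        # Log-likelihood of i.i.d. Gaussian observations with mean theta.
        log_lik = sum(-0.5 * ((x - theta) / sigma) ** 2 for x in data)
        log_post.append(log_lik + math.log(prior(theta)))
    # Subtract the maximum before exponentiating for numerical stability.
    m = max(log_post)
    weights = [math.exp(lp - m) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]

data = [1.8, 2.3, 2.1]                        # made-up observations
grid = [i * 0.01 for i in range(-300, 501)]   # theta from -3 to 5
post = grid_posterior(data, grid, gaussian_prior)

post_mean = sum(t * p for t, p in zip(grid, post))
sample_mean = sum(data) / len(data)
print(f"sample mean = {sample_mean:.3f}, posterior mean = {post_mean:.3f}")
```

Working in log space avoids underflow when many observations are multiplied together; the normalisation at the end turns the product into a discrete approximation of the posterior.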

Advantages

If priors are well chosen → better than frequentist estimates with small sample sizes

Disadvantages

Integrating over millions of parameters and performing multiple predictions for each parameter setting → infeasible

How to encode or represent the Bayesian posterior when it is very high-dimensional?

No closed-form representation of the posterior over weights

Represent data with histograms and use Monte Carlo
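One way to read the histogram and Monte Carlo idea: draw samples from the posterior without ever normalising it, then summarise the samples with a histogram. The sketch below uses a random-walk Metropolis sampler, which is a choice made here for illustration and is not named in the notes; the one-dimensional Gaussian model, the made-up data, and all function names are assumptions.

```python
import math
import random

def log_unnorm_posterior(mu, data, sigma=1.0, mu0=0.0, tau=1.0):
    """Log of likelihood times prior, up to an additive constant (assumed Gaussian model)."""
    log_lik = sum(-0.5 * ((x - mu) / sigma) ** 2 for x in data)
    log_prior = -0.5 * ((mu - mu0) / tau) ** 2
    return log_lik + log_prior

def metropolis(data, n_samples=20000, step=0.5, seed=0):
    """Random-walk Metropolis sampler targeting the posterior of mu."""
    rng = random.Random(seed)
    mu = 0.0
    current = log_unnorm_posterior(mu, data)
    samples = []
    for _ in range(n_samples):
        proposal = mu + rng.gauss(0.0, step)
        candidate = log_unnorm_posterior(proposal, data)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            mu, current = proposal, candidate
        samples.append(mu)
    return samples[n_samples // 10:]  # discard the first 10% as burn-in

def histogram(samples, lo, hi, n_bins):
    """Count samples falling into n_bins equal-width bins on [lo, hi)."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for s in samples:
        if lo <= s < hi:
            counts[min(int((s - lo) / width), n_bins - 1)] += 1
    return counts

data = [1.8, 2.3, 2.1]  # made-up observations
samples = metropolis(data)
counts = histogram(samples, lo=-1.0, hi=4.0, n_bins=50)
mc_mean = sum(samples) / len(samples)
print(f"Monte Carlo posterior mean = {mc_mean:.3f}")
```

The sampler only needs ratios of the unnormalised posterior, so the intractable normalising integral never has to be computed. In high dimensions the same idea applies, although plain random-walk proposals usually mix far too slowly to be practical.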

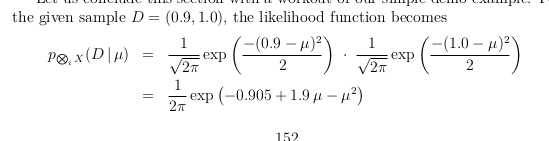

Example

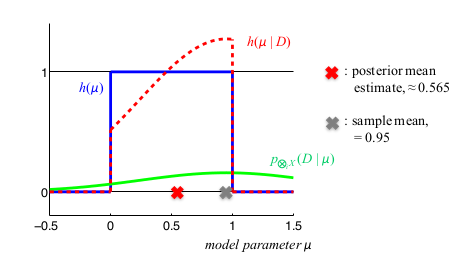

Green: prior, Red: posterior

The posterior mean estimate is obtained by integrating $\int_{\mathbb{R}} \mu \, h(\mu \mid D) \, d\mu$

Since this differs from the sample mean → the prior distribution really does influence the estimated model
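As a worked case of how the prior pulls the posterior mean away from the sample mean, suppose (an assumption made here, since the notes do not state the model behind the figure) that the data are Gaussian with known variance $\sigma^2$ and the prior on $\mu$ is Gaussian with mean $\mu_0$ and variance $\tau^2$. The posterior is then Gaussian as well, and the integral has a closed form:

$$
h(\mu \mid D) \;\propto\; \exp\!\left(-\frac{1}{2\sigma^2}\sum_{i=1}^{n}(x_i-\mu)^2\right)\exp\!\left(-\frac{(\mu-\mu_0)^2}{2\tau^2}\right)
$$

$$
\int_{\mathbb{R}} \mu \, h(\mu \mid D)\, d\mu \;=\; \frac{\dfrac{n}{\sigma^2}\,\bar{x} + \dfrac{1}{\tau^2}\,\mu_0}{\dfrac{n}{\sigma^2} + \dfrac{1}{\tau^2}}
$$

The result is a precision-weighted average of the sample mean $\bar{x}$ and the prior mean $\mu_0$. For example, with $n = 3$, $\bar{x} = 2$, $\sigma = \tau = 1$ and $\mu_0 = 0$, the posterior mean is $6/4 = 1.5$ rather than $2$. As $n$ grows, the weight on $\bar{x}$ dominates and the two estimates converge.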