ADVENT

- blog

- paper

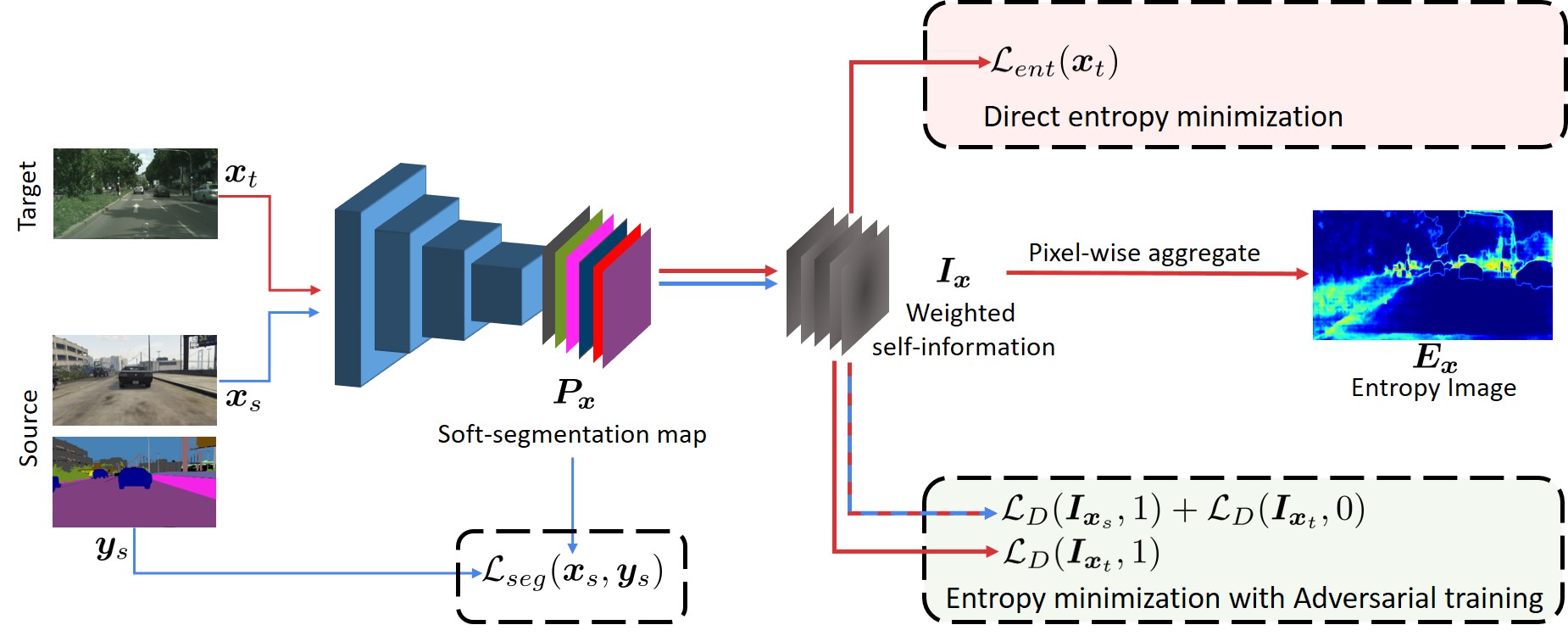

- ADVENT is a flexible technique for bridging the gap between two different domains through entropy minimization.

- Models trained only on the source domain tend to produce over-confident (low-entropy) predictions on source-like images and under-confident (high-entropy) predictions on target-like ones.

- Consequently, by minimizing the entropy of the predictions on the target domain, we make the feature distributions from the two domains more similar.

- More annotated data has been shown to consistently improve the performance of DNNs, but annotating it is costly, which motivates domain adaptation (DA).

- Here we work on unsupervised domain adaptation (UDA), a more challenging setting where we have access to labeled source samples but only unlabeled target samples. As the source we use data generated by a simulator or video game engine; as the target we use real data from car-mounted cameras.

- The main approaches for UDA include discrepancy minimization between source and target feature distributions, usually achieved via adversarial training (ganin2015unsupervised, tzeng2017adversarial); self-training with pseudo-labels (zou2018unsupervised); and generative approaches (hoffman2018cycada, wu2018dcan).

- We present our two proposed approaches for entropy minimization, using (i) an unsupervised entropy loss and (ii) adversarial training. To build our models, we start from existing semantic segmentation frameworks and add a network branch used for domain adaptation.

- Direct entropy minimization

- Entropy minimization by adversarial learning

- GTA5

- SYNTHIA

- Cityscapes
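
To make the two entropy-based items above more concrete, here is a minimal sketch in plain Python of the quantities they rely on. It is not the authors' implementation. It assumes the per-pixel entropy is the Shannon entropy of the softmax output normalized by log(C) for C classes, that the direct loss averages this over pixels (a sum would differ only by a constant factor), and that the adversarial branch would consume per-class "weighted self-information" terms -p·log(p). All function names, the `eps` constant, and the toy logits are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one pixel's class scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_self_information(probs, eps=1e-30):
    """Per-class terms -p * log(p); their sum is the Shannon entropy."""
    return [-p * math.log(p + eps) for p in probs]


def normalized_entropy(probs):
    """Shannon entropy of one pixel's prediction, scaled to [0, 1]."""
    return sum(weighted_self_information(probs)) / math.log(len(probs))


def entropy_loss(prob_map):
    """Mean normalized entropy over an H x W map of class distributions.

    Assumption: averaging over pixels; no labels are needed, so this can
    be evaluated on unlabeled target images.
    """
    pixels = [p for row in prob_map for p in row]
    return sum(normalized_entropy(p) for p in pixels) / len(pixels)


# Toy 1 x 2 "images" with 3 classes (hypothetical logits).
source_like = [[softmax([8.0, 0.0, 0.0]), softmax([0.0, 9.0, 0.0])]]
target_like = [[softmax([0.2, 0.0, 0.1]), softmax([0.0, 0.1, 0.0])]]

loss_source = entropy_loss(source_like)  # confident predictions, low entropy
loss_target = entropy_loss(target_like)  # uncertain predictions, high entropy
print(loss_source, loss_target)
```

In a real training loop, the direct approach would add a term like `entropy_loss` on target predictions to the supervised segmentation loss on source images, while the adversarial approach would feed maps built from `weighted_self_information` to a domain discriminator. Both would operate on differentiable tensors rather than Python lists.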